When is Web Scraping the Answer? The Top 7 Use Cases for Data Extraction

Despite its rise in popularity, web scraping is still an obscure topic for many, and that’s a pity. Plenty of businesses and individuals have much to gain by gathering and analyzing all the data publicly available on the Internet.

I’m willing to bet that you, dear reader, could benefit from some well-planned web scraping.

In this article, I want us to go over some of the more common use cases for data extraction tools. Chances are, you’ll learn about uses you hadn’t thought of until now.

But first, let’s put things into perspective by diving into how web scraping has helped build the Internet we know today.

Under the hood of a web scraper

Data extraction may sound like a new concept, but it really isn’t. Web scrapers are constantly evolving to keep up with the development of anti-bot tools, but the idea itself is almost as old as the Internet.

Back when search engines weren’t a thing, finding the site or information you wanted was a painstaking process. So people started saving the data they needed, rather than sifting through the early Internet again and again. Around that time, programmers began building the first web scrapers to automate downloading that data.

Then search engines came around and did much of the same thing, but on a much grander scale. Engines like Google and Yahoo send crawlers all over the net to identify websites and get some basic info on what kind of content these sites have. Then, they use a different tool to scrape and analyze all the data. After they know what pages a website has and what’s on them, they can generate the search engine results pages for different keywords, showing sites that match the query.

Data extraction is, in fact, fundamental to how the modern web works, since it enables us, the users, to easily find whatever content we want.

Now, whether you use software, a browser extension, a handy API, or a scraper you built yourself, you’re doing much the same thing. The tool receives one or more URLs, visits the pages, loads their HTML code, and then extracts data from it. You might want all of the code, but it’s more likely that you’ll want a specific part, like prices on an eCommerce site or emails on a contact page.
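To make that flow concrete, here is a minimal sketch using only Python’s standard library: it takes a list of URLs, downloads each page, and keeps the text of every element carrying a given CSS class. The class name `price`, the user-agent string, and the function names are illustrative assumptions, not any particular tool’s API. It also assumes reasonably well-formed HTML; real-world pages are messier, which is why dedicated parsing libraries exist.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class ClassTextExtractor(HTMLParser):
    """Collects the text inside every element whose class list contains target_class."""

    def __init__(self, target_class):
        super().__init__()
        self.target_class = target_class
        self.depth = 0      # > 0 while we are inside a matching element
        self.buffer = []
        self.results = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1  # a nested tag inside a matching element
        elif self.target_class in (dict(attrs).get("class") or "").split():
            self.depth = 1
            self.buffer = []

    def handle_startendtag(self, tag, attrs):
        pass  # self-closing tags such as <br/> carry no text

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self.depth:
            return
        self.depth -= 1
        if self.depth == 0:
            self.results.append("".join(self.buffer).strip())

    def handle_data(self, data):
        if self.depth:
            self.buffer.append(data)


def fetch_html(url, timeout=10):
    """Download a page and decode it using the charset the server declares."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def scrape(urls, target_class, fetch=fetch_html):
    """Return {url: [text of each element with target_class]} for every URL."""
    results = {}
    for url in urls:
        parser = ClassTextExtractor(target_class)
        parser.feed(fetch(url))
        parser.close()
        results[url] = parser.results
    return results
```

A call such as `scrape(["https://shop.example/coffee-machines"], "price")` (a hypothetical URL) would return the price strings found on that page. The `fetch` parameter is there so the download step can be swapped out, for example for a headless browser or a scraping API.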

And so, we’re back to the “meat” of the article: how to use a web scraper for the benefit of your business.

1. Modernizing the real estate industry with accessible, actionable data

The real estate industry as a whole has benefited greatly from web scraping.

The best-known examples are property listing websites. These platforms gather offers from all over the Internet via web scraping and present them all in one place.

Individuals or businesses can find all opportunities in one place, instead of browsing sites from individual realtors or having to rely on an agent to bring them a catalog.

Most of these aggregator sites have crawlers and scrapers working around the clock. Their offers don’t have to be added manually, as a script uploads the data automatically. But the benefits go beyond quality-of-life improvements. Data extraction really shines in large-scale projects, where there’s a lot of information to collect and process, and the real estate industry is precisely that kind of environment.

Both investors and realtors need to know which direction the market is heading, both in general and in specific locations (cities or even neighborhoods). To know that, you need data on as many properties as possible: property valuations, asking prices, vacancy rates, sales cycles, and so on. Once collected and processed, all that data is vital for deciding when and where to buy, sell, or rent.
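Turning raw listings into a view of the market usually means aggregating them by location. The sketch below assumes each scraped listing has already been parsed into a dict with hypothetical `neighborhood`, `asking_price`, and `area_sqm` fields, and computes the median asking price per square metre for each neighborhood. The median is used instead of the mean so that a handful of luxury properties doesn’t skew the picture.

```python
from collections import defaultdict
from statistics import median


def price_per_sqm_by_area(listings):
    """Median asking price per square metre and listing count for each neighborhood."""
    by_area = defaultdict(list)
    for item in listings:
        if item.get("area_sqm"):  # skip listings with a missing or zero floor area
            by_area[item["neighborhood"]].append(item["asking_price"] / item["area_sqm"])
    return {
        area: {"median_price_per_sqm": round(median(values), 2), "listings": len(values)}
        for area, values in by_area.items()
    }
```

Running the same function on snapshots scraped a month apart is a simple way to see whether a neighborhood is heating up or cooling down.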

Real estate unicorn Opendoor is a good example of how much impact web scraping can have in the industry. Its business model is to buy properties from homeowners, make improvements that raise their value, and then sell them at a profit. The key factor is the return on investment in these transactions. Because it knows the market well, Opendoor can judge how much to offer homeowners and how much to ask from new buyers in order to turn a profit as the middleman.

As a bonus, realtors can also use web scraping to keep an eye on their competitors. It’s a crowded, competitive market, so by knowing the price ranges of competing agencies, realtors can win customers over with lower prices.

2. Market analysis for all

Real estate isn’t the only industry where it pays to know the market and the competition. In fact, this info is valuable to any business that wants to get a leg up.

Let’s say that you want to launch a product that can be sold both in-store and online. We’ll take a coffee machine as an example, mainly because I’m craving a coffee as I’m writing this article.

You’ll need to know the price ranges of different coffee machines, as well as their features and the type of coffee they use. Since there are a lot of models and marketplaces to check, you decide to use web scraping to speed up the process.

So, you extract data from the websites of top coffee machine manufacturers, then you do the same on Amazon and Walmart, and a few other big eCommerce websites. You gather info such as prices, features, and reviews.

After centralizing the data, you can calculate the mean price of all products that have a specific feature. Thanks to the reviews, you can also gauge customer interest in specific features, stores, and brands. In short, you have all the knowledge you need to prepare a go-to-market strategy.
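Both calculations are short once the scraped products sit in one list. In this sketch, each product is assumed to be a dict with hypothetical `brand`, `price`, `features`, and `review_count` fields; review volume is used only as a rough proxy for customer interest, which is an assumption rather than a standard metric.

```python
from collections import defaultdict
from statistics import mean


def mean_price_by_feature(products):
    """Average price of all products that offer each feature."""
    prices = defaultdict(list)
    for product in products:
        for feature in product["features"]:
            prices[feature].append(product["price"])
    return {feature: round(mean(values), 2) for feature, values in prices.items()}


def review_interest_by_brand(products):
    """Total review count per brand, sorted from most to least reviewed."""
    totals = defaultdict(int)
    for product in products:
        totals[product["brand"]] += product["review_count"]
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))
```

The same grouping pattern works for stores: swap the `brand` key for whichever store each product was scraped from.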

After the launch, you can also use web scraping to monitor what the competition is doing. If they launch a new model or offer special prices, you’ll get an early heads-up and can respond accordingly.
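Monitoring usually boils down to scraping the same pages on a schedule and comparing each run with the previous one. This minimal sketch assumes every snapshot has been reduced to a `{product_id: price}` dict; the 5% threshold for a noteworthy price drop is an arbitrary, hypothetical default.

```python
def diff_snapshots(old, new, min_drop_pct=5.0):
    """Compare two {product_id: price} snapshots scraped at different times."""
    new_products = sorted(set(new) - set(old))
    removed_products = sorted(set(old) - set(new))
    price_drops = {
        pid: (old[pid], new[pid])
        for pid in set(old) & set(new)
        if old[pid] > 0 and (old[pid] - new[pid]) / old[pid] * 100 >= min_drop_pct
    }
    return {"new": new_products, "removed": removed_products, "price_drops": price_drops}
```

Any non-empty result is a candidate for an alert, whether that is an email, a chat message, or a row in a report.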

It’s also worth checking how distributors and resellers present your product online. It’s crucial that all your product data is correct and shown in a favorable light.
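One way to run that check is to compare each scraped reseller listing against your own reference data, field by field. The field names, the product name “BrewMaster 3000”, and the price tolerance below are hypothetical; the sketch simply reports anything missing or different.

```python
def audit_listing(reference, listing, price_tolerance=0.0):
    """List discrepancies between a scraped reseller listing and your reference data."""
    issues = []
    for field, expected in reference.items():
        actual = listing.get(field)
        if actual is None:
            issues.append(f"missing {field}")
        elif field == "price":
            if abs(actual - expected) > price_tolerance:
                issues.append(f"price {actual} differs from {expected}")
        elif actual != expected:
            issues.append(f"{field}: expected {expected!r}, found {actual!r}")
    return issues


reference = {"name": "BrewMaster 3000", "price": 249.0, "capacity_l": 1.5}
print(audit_listing(reference, {"name": "BrewMaster 3000", "price": 229.0}))
```

An empty list means the listing matches your reference data; anything else is worth a message to the reseller.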

This kind of process can be applied to just about any product or service. Since web scraping excels at large-scale data extraction, it pays off more in mainstream industries than in niche ones, where there is simply less data to collect.

3. Extra power to the buyer

Let’s stay on the subject of product price scraping a bit longer. After all, it’s a subject of interest not only for sellers but for buyers as well.

Whether you’re a computer manufacturing enterprise or a high school student interested in sneakers, one thing remains constant: you always want to buy what you need at the lowest price possible.

Since we’ve already discussed how well web scraping gathers price information, we’ll focus on how buyers can put it to use.

For businesses that buy plenty of materials or merchandise, such as a retail store or a construction company, purchase prices are crucial for growth. By keeping a keen eye on prices from all vendors, these businesses can make sure they always buy at the lowest price and never miss a special deal.

For individuals, high-priced, low-stock goods, like designer clothes or certain car models, present the same problem, and web scraping offers the same solution. By monitoring prices and availability, people can get the best deals on the hard-to-find products they want.
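Whether you are buying cement by the pallet or a single pair of sneakers, the core logic is the same: keep the cheapest offer that is actually in stock and flag it when it drops below the price you’re willing to pay. The offer fields (`product`, `vendor`, `price`, `in_stock`) and the target prices in this sketch are hypothetical.

```python
def best_offers(offers, targets=None):
    """Cheapest in-stock offer per product, plus products priced at or below a target."""
    targets = targets or {}
    best = {}
    for offer in offers:
        if not offer["in_stock"]:
            continue  # an unavailable offer is no deal, however cheap
        current = best.get(offer["product"])
        if current is None or offer["price"] < current["price"]:
            best[offer["product"]] = offer
    alerts = sorted(
        product for product, offer in best.items()
        if product in targets and offer["price"] <= targets[product]
    )
    return best, alerts
```

Feeding it fresh offers on every scraping run turns it into a simple deal alert.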

4. More leads in less time

A business that sells common goods can find customers by stocking store shelves, selling online, using social media, and placing ads. There are plenty of other ways, of course, but these are some of the main ones.

For a niche product or service, though, the strategies mentioned above might not work. If you’re selling something that’s only useful at a certain point in time or only to a specific type of customer, you’ll face problems such as:

- There aren’t specialty stores for your product or it gets little attention in department stores;

- The product has a low search volume online, so the company website or eCommerce sites don’t get traffic;

- The target audience may be highly dispersed or show little interest in the product outside of its uses, so it gains little traction on social media;

- The target audience is small, so ads (online or otherwise) present little return on investment.

In such cases, you’ll most likely have to turn to outbound marketing strategies to generate leads.

There’s always the option of buying lead lists from specialized companies. Some even prepare an introduction for you and each lead. However, these services can be expensive, and web scraping is often a more cost-effective way of getting the same results.

Let’s say that you want to do business with the realtors we mentioned earlier. A good place to start is on a listing aggregation site. Just scrape the pages for the contact information of each agent and the business information of the agency they’re part of. Another good option is to go straight to a list of real estate agencies in a specific city or region. The process is the same.

Then you can go to each company’s website and scrape for contact information there too. Once you’re done, you’re sitting on a complete list of possible clients, as well as addresses, contact methods, and employee information.
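The contact-gathering step can be sketched as pulling email addresses out of every page scraped for an agency and merging them into one deduplicated lead list. The regular expression below is a deliberately simple pattern that catches common addresses, not a full email validator, and the agency names and addresses in the usage line are hypothetical.

```python
import re

# A pragmatic pattern for common addresses; it is not a complete RFC 5322 validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(html):
    """Unique, lowercased email addresses in order of first appearance."""
    return list(dict.fromkeys(match.lower() for match in EMAIL_RE.findall(html)))


def build_leads(pages_by_agency):
    """Map each agency to the unique emails found across all of its scraped pages."""
    return {
        agency: list(dict.fromkeys(email for html in pages for email in extract_emails(html)))
        for agency, pages in pages_by_agency.items()
    }


leads = build_leads({"Sunrise Realty": ['<p>Contact: info@sunrise.example</p>']})
```

Phone numbers and street addresses can be added the same way, each with its own pattern or parser, before the list is exported to a CRM or spreadsheet.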

This strategy works in just about any industry. The only things that change are the sites you should scrape for the much-needed information.

5. Brand perception — demystified

Any company worth its salt is mindful of how its brand is perceived. While customers’ and leads’ perception could be considered the most important, all stakeholders matter. That includes investors, providers, employees, industry experts or influencers, and the public as a whole.

The challenge is keeping your finger on the pulse when people may be chatting privately, on forums, and on social media groups about your business. In short, having eyes and ears everywhere is next to impossible.

Still, web scraping can be a major step up for brand monitoring.

Here are just a few examples of pages that hold valuable knowledge for marketers and branding specialists:

- SERPs with the brand or product/service as the search term

- Online forums centered around the brand, product, service, or industry

- Social media accounts and groups with the same focus

- Reviews on specialized websites (including Google Reviews)

With all that data, you just have to search for your brand or product name to see what people are saying. It’s an excellent bridge between quantitative and qualitative analysis.
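A small example of that bridge: the sketch below counts how often a brand is mentioned per source (the quantitative side) and keeps a short text snippet around each mention for someone to read (the qualitative side). It assumes the scraper has produced a list of `{"source": ..., "text": ...}` dicts; the brand “Brewly” is hypothetical. The word-boundary match avoids false hits on longer words, but it assumes the brand name starts and ends with a letter or digit.

```python
import re
from collections import Counter


def brand_mentions(posts, brand, context=40):
    """Count mentions of `brand` per source and collect short context snippets."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    counts = Counter()
    snippets = []
    for post in posts:
        for match in pattern.finditer(post["text"]):
            counts[post["source"]] += 1
            start = max(0, match.start() - context)
            end = match.end() + context
            snippets.append((post["source"], post["text"][start:end].strip()))
    return counts, snippets
```

The counts show where people talk about you; the snippets show what they say, which is where sentiment analysis or a human reviewer takes over.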

Through this process, businesses gain precious insight for actions like rebranding or PR campaigns, special offers, or entering new markets.

Gathering data on competing brands also helps you measure important differentiators, analyze what advantages each side has and take action accordingly.

6. Supercharged machine learning

One of the most inspiring and talked-about developments in the tech industry is machine learning. AI-powered solutions can, and most likely will, have a huge impact on many areas in the coming years.

But, machine learning is far from easy. The basic principle is that developers have to train their algorithms to effectively operate on their own. For that, they need large amounts of high-quality sample data.

For example, say you want to train an AI to recognize traffic lights and correctly tell which light is currently on. To do that, you’ll need a huge library of photos (both with traffic lights in them and without) to help the AI learn the distinctions.

In that case, instead of manually going through pages upon pages of traffic light photos on Google Images and stock photo sites, you can have a web scraper do it. You can even add a filter so that the scraper only downloads images whose description (such as their alt text) contains the term “traffic light”.
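A minimal sketch of that filter, assuming the keyword is matched against each image’s `alt` attribute (the usual place an image’s description lives in HTML). It only collects absolute URLs of matching images; downloading them is a separate step. The base URL in the usage line is hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImageFilter(HTMLParser):
    """Collects absolute URLs of <img> tags whose alt text contains a keyword."""

    def __init__(self, base_url, keyword):
        super().__init__()
        self.base_url = base_url
        self.keyword = keyword.lower()
        self.matches = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = (attributes.get("alt") or "").lower()
        src = attributes.get("src")
        if src and self.keyword in alt:
            # Resolve relative paths like /img/a.jpg against the page URL.
            self.matches.append(urljoin(self.base_url, src))


def find_images(html, base_url, keyword):
    parser = ImageFilter(base_url, keyword)
    parser.feed(html)
    parser.close()
    return parser.matches


urls = find_images('<img src="/img/tl.jpg" alt="Traffic light">', "https://photos.example/", "traffic light")
```

Alt text is written by people and is often missing or vague, so a trained model or a manual review is still useful for cleaning up the final dataset.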

The same goes for text and other forms of content. You just have to point the scraper in the right direction, validate the data for safety, and feed it to the algorithm.
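What “validating the data” means depends on the project, but a few basic quality checks apply to almost any scraped text corpus. This sketch normalizes whitespace, drops very short samples, and removes exact duplicates (case-insensitively) by hashing each sample. The 20-character minimum is an arbitrary assumption, and safety checks such as filtering harmful or personal content would come on top of this.

```python
import hashlib


def clean_text_samples(samples, min_chars=20):
    """Normalize whitespace, drop very short samples and case-insensitive duplicates."""
    seen = set()
    cleaned = []
    for text in samples:
        normalized = " ".join(text.split())
        if len(normalized) < min_chars:
            continue
        digest = hashlib.sha256(normalized.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # already kept an identical sample
        seen.add(digest)
        cleaned.append(normalized)
    return cleaned
```

Hashing keeps memory use low on large corpora, since only fixed-size digests are stored rather than every full sample.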

The specifics of the data extraction process depend on the specifics of the machine learning project you’re working on.

7. Data-based recruitment

Attracting top talent to your company is rarely easy. Even if your business has a great reputation and offers top-tier benefits, recruiters still have their work cut out for them.

One way to make the work easier is to use extracted data to better understand the job market. For example, if you’re on the lookout for a product designer, the first step would be to find other offers for the same position. Visit job portals, scrape the relevant data, and combine it all into a single file.

Take a look at the company names in that file. Those are your competition because they’re looking for the same people as you. Examine the info, like offered wages, benefits, job descriptions, and so on and you’ll know what you have to beat to get the best and brightest in your team.
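Once the offers are in one file, a few lines of code give you that overview. The sketch assumes each scraped offer is a dict with hypothetical `company` and `salary` fields, where `salary` is `None` when the posting doesn’t state one. It lists the competing employers and the salary range you’d have to beat.

```python
from statistics import median


def summarize_offers(offers):
    """Competing employers and salary spread for one position."""
    salaries = sorted(o["salary"] for o in offers if o.get("salary") is not None)
    return {
        "competitors": sorted({o["company"] for o in offers}),
        "offers_with_salary": len(salaries),
        "salary_min": salaries[0] if salaries else None,
        "salary_median": median(salaries) if salaries else None,
        "salary_max": salaries[-1] if salaries else None,
    }
```

Benefits and job descriptions don’t reduce to numbers as neatly, so those fields are better compared side by side.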

This recruiting strategy is especially useful for large corporations, which can afford to hire people in different locations based on where they’re most likely to find top talent.

On the same topic, you can go headhunting on your own by scraping LinkedIn or other sites that host professional profiles. You just run a search based on the traits you want and get the data, nice and fast. Whether this should be prohibited was tested in court in hiQ Labs v. LinkedIn, where the U.S. Ninth Circuit sided with scraping publicly accessible profiles under federal anti-hacking law, though a site’s terms of service can still apply.

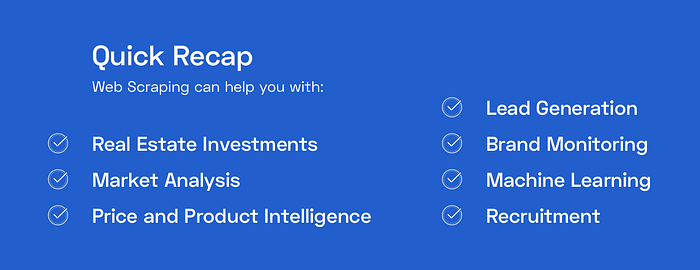

In conclusion, is web scraping the sole path to success?

Obviously not. Sensationalist headlines aside, web scraping can be a powerful tool in the hands of both businesses and individuals, but it’s not perfect.

For starters, many data extraction tools require programming knowledge. Not all of them, but your options are somewhat limited if you don’t know your way around code.

Moreover, web scraping can be expensive. Plenty of service providers offer trials or free versions. But don’t expect to scrape en masse for free.

Lastly, in certain situations scraping can expose you to a lawsuit. If a website’s terms of service forbid automated data extraction and you scrape it anyway, you may be in breach of those terms. Remember to read and respect a site’s terms and its robots.txt file before scraping.
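Checking robots.txt can be automated with Python’s built-in `urllib.robotparser` module. This sketch parses robots.txt content you have already downloaded and asks whether a given URL may be fetched and what crawl delay, if any, the site requests. The user-agent name and URLs are hypothetical; in a live scraper you could call `set_url()` and `read()` on the parser instead of `parse()`.

```python
from urllib.robotparser import RobotFileParser


def check_robots(robots_txt, url, user_agent="example-scraper"):
    """Return (allowed, crawl_delay) for url according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url), parser.crawl_delay(user_agent)


robots = "User-agent: *\nDisallow: /private/\nCrawl-delay: 5\n"
print(check_robots(robots, "https://site.example/private/data"))
```

Keep in mind that robots.txt only covers what crawlers are asked to do; a site’s terms of service are a separate document and still need a read.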

Everything has advantages and disadvantages. Web scraping is no different. But, in the right hands and with the right plan, there’s no denying its effectiveness.

I hope that I got you interested in data extraction and that you’ll give it a try. Now, I’d recommend you take a look at the different products the web scraping market has to offer. Don’t know where to start? Here’s a handy list of the top 10 web scraping APIs you should check out.